Introducing the Flowlytics Filtering Service

What’s new with Flowlytics?

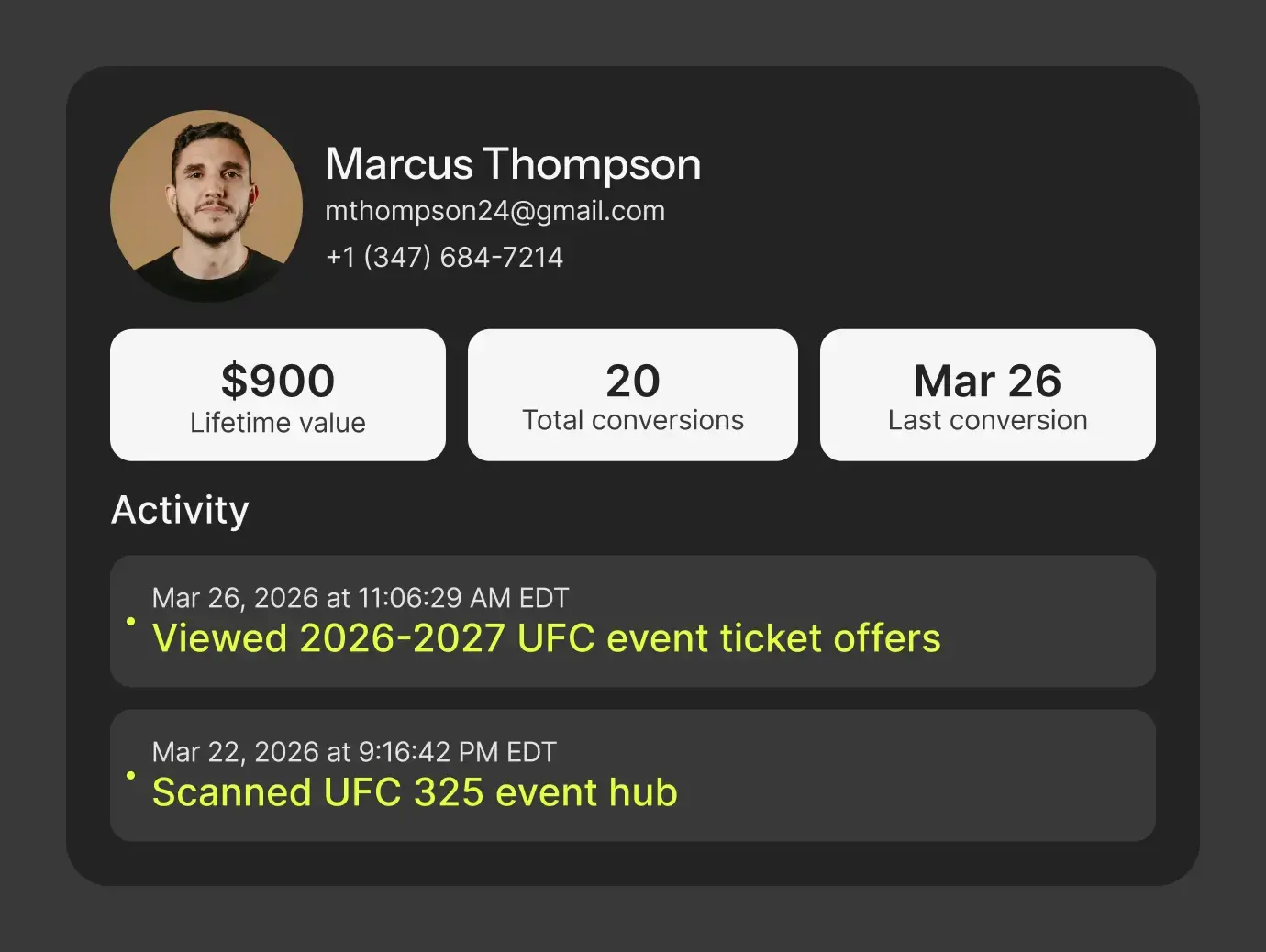

We’ve just released the Flowlytics Filtering Service, a new feature of our Flowcode Analytics data reporting platform. It filters ‘bot traffic’ out of your reporting data so that the scans and clicks you see on your portal come from humans.

Let’s take a step back: why does this matter? A significant share of internet traffic comes from non-human, automated sources, otherwise known as ‘bots’. To give our customers the most reliable scan and click data, we’ve built an automated system that monitors the sources of traffic to Flowcodes and Flowpages. The system parses all of this traffic and filters any non-human sources out of your analytics dashboard.

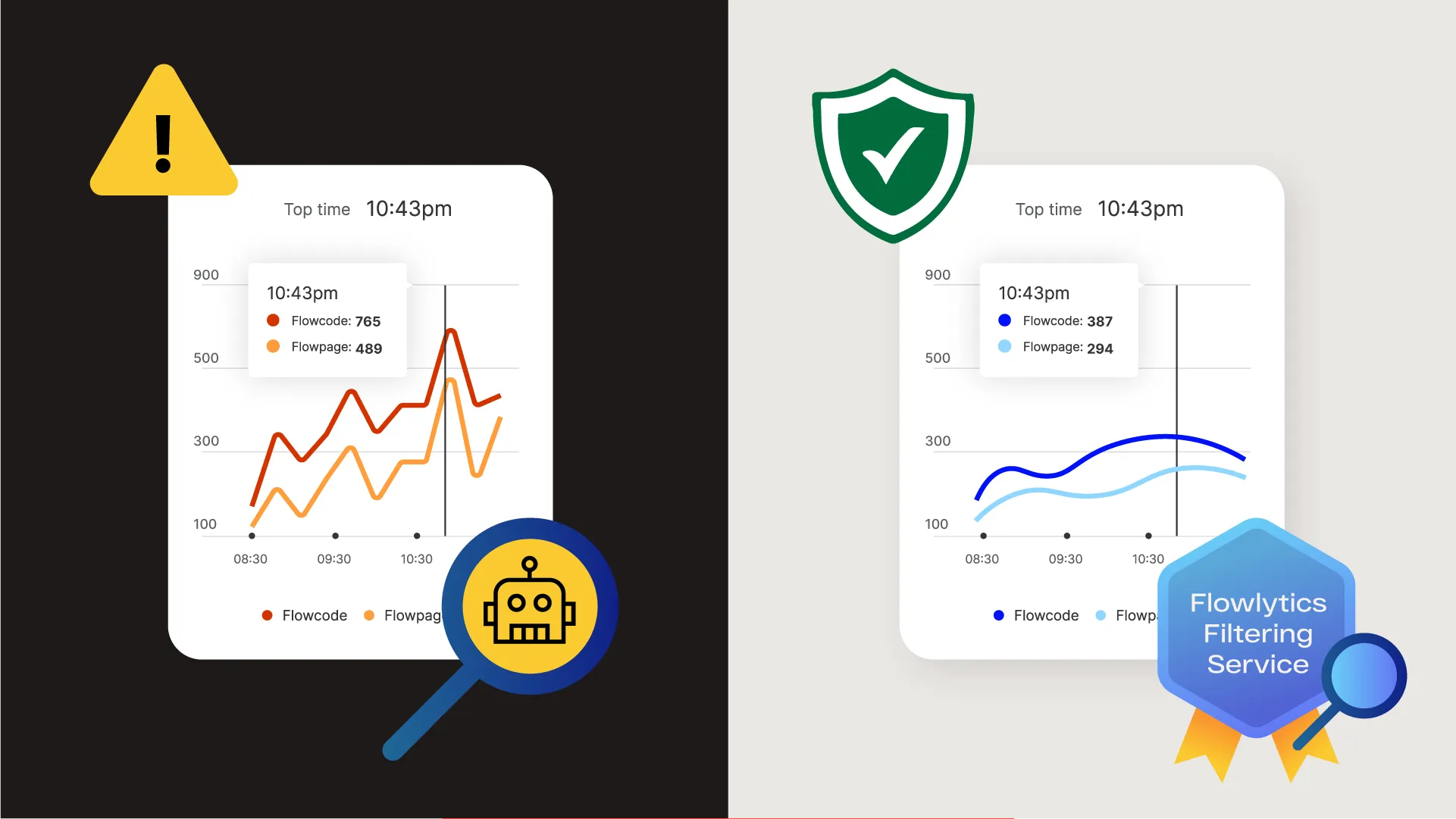

What does this mean for you as a Flowcode user? As a result of this update, you might see a drop in your past and future Flowlytics numbers (scans, clicks, or other impressions). Based on our analysis, we estimate that most customers won’t notice a significant drop in historical or incoming impressions. However, a small subset of users, and some individual Flowcodes and Flowpages, have been disproportionately hit by bot traffic; for them, the drop may be substantial.

Why is this update important?

Flowcode prioritizes platform integrity above all. A significant part of this is giving you reliable data by vetting the sources your scans, pageviews, and clicks originate from. As a Flowcode user, you know that data tells powerful stories and drives important decisions. However, data becomes much less helpful when it’s diluted by noise. Through the Flowcode platform, you track how customers engage with your campaigns (how many people scanned, clicked, and converted) so that you can attribute your marketing spend accurately and learn about your customers. Our new Flowlytics Filtering Service ensures your Flowlytics data reports deliver the most reliable and relevant data so that you can gather better insights.

Here are a couple of examples. Imagine you see a huge spike in pageviews, but no significant increase in your click rates. Alternatively, you might see a significant jump in clicks on a specific day without any change in your strategy or placement to explain it. Unexplained variance like this makes it difficult for you and your team to identify your best-performing Flowcodes or Flowpages, and can lead you to draw the wrong conclusions about their success.

The filtering service is part of our effort to make your data as accurate as possible so that you can better understand the trends within it. In short, automatically filtering unhelpful “noise” out of your data lets you gather more robust insights from our reporting platform.

What are ‘bots’? How do you identify them?

As we mentioned, traffic to your Flowcodes or Flowpages may come from non-human sources such as bots, which can falsely inflate your data reports. A bot is preprogrammed software that runs automated tasks, often imitating human actions such as scanning, clicking, and browsing. Bots aren’t inherently bad: search engines, browsers, and other services run them for many legitimate reasons, such as informing search rankings and identifying malicious content. Bots include web crawlers, scrapers, and other automated tools created by browsers, applications, and other online users. Some industry estimates put bots at over half of all internet traffic.

How do we distinguish between actions from a “bot” and a human? User Agents.

Whenever a Flowcode is scanned or a Flowpage is viewed, the event is associated with an HTTP request sent from the user’s device. This lets the Flowcode team analyze the headers of each request, which carry information about the source that initiated the action. The User Agent is a string of text carried in one of these request headers. It acts like a digital ID: a block of text that describes the software (and often the device) that generated the traffic and offers clues about who could be behind it. Simply put, the User Agent tells us where a click, scan, or pageview comes from.
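To make this concrete, here is a minimal Python sketch of where the User Agent lives in a request. It assumes a raw HTTP/1.1 request available as plain text; the function name `get_user_agent` and the sample request are hypothetical, and in practice a web server or framework parses headers for you.

```python
from typing import Optional


def get_user_agent(raw_request: str) -> Optional[str]:
    """Return the User-Agent header value from a raw HTTP/1.1 request, or None."""
    # Everything before the first blank line is the request line plus headers.
    head = raw_request.split("\r\n\r\n", 1)[0]
    # Skip the request line (e.g. "GET /page HTTP/1.1") and scan the headers.
    for line in head.split("\r\n")[1:]:
        name, sep, value = line.partition(":")
        # Header names are case-insensitive, so compare in lowercase.
        if sep and name.strip().lower() == "user-agent":
            return value.strip()
    return None


# Hypothetical request, as it might arrive when a script fetches a Flowpage.
request = (
    "GET /page HTTP/1.1\r\n"
    "Host: example.com\r\n"
    "User-Agent: curl/7.68.0\r\n"
    "Accept: */*\r\n"
    "\r\n"
)
print(get_user_agent(request))  # curl/7.68.0
```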

Common Bot Traffic Identifiers

User Agents are a useful built-in part of HTTP request headers that helps our team understand the details behind device activity and, ultimately, separate automated traffic from human traffic. However, User Agents don’t follow a strict standard format, so they appear in many different forms, which makes spotting bots difficult. Fortunately, our team has identified several key patterns within a User Agent that help us classify it as a bot or not.

We consider an interaction with a Flowcode or Flowpage to be bot traffic if the User Agent connected to that request meets one or more of the following criteria:

1. Self Disclosure: The User Agent clearly identifies itself as a bot and explains its purpose and behavior

The easiest bots to identify include a link in the User Agent to a page explaining the bot’s source and purpose. Examples:

- Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) - Google search engine crawler user agent

- Mozilla/5.0 (compatible; BLEXBot/1.0; +http://webmeup-crawler.com/) - user agent associated with WebMeUp, a common backlink checker bot
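A minimal Python sketch of this first check, assuming that any URL inside a User Agent signals self-disclosure (ordinary browser User Agents don’t include one). The function name and the sample Chrome string are hypothetical illustrations, not Flowcode’s actual rule.

```python
import re

# Self-disclosing bots embed a URL (often prefixed with "+") that points to a
# page documenting the bot. Typical browser User Agents contain no URL at all.
URL_IN_UA = re.compile(r"\+?https?://\S+", re.IGNORECASE)


def discloses_itself(user_agent: str) -> bool:
    return URL_IN_UA.search(user_agent) is not None


googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Sample desktop Chrome User Agent, used here as a "human" baseline.
chrome = ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
          "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36")
print(discloses_itself(googlebot), discloses_itself(chrome))  # True False
```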

2. Contains Keyword Identifiers: The User Agent contains clear bot-related keywords

Even if a bot’s User Agent does not overtly provide a page explaining its purpose, many contain obvious keywords such as “bot”, “crawler” or “spider” that clearly indicate it’s a bot. Examples:

- Screaming Frog SEO Spider/9.2 - bot that audits a site for SEO performance issues

- pr-cy.ru Screenshot Bot - bot designed to take page screenshots for various purposes
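The keyword check can be sketched as a case-insensitive search. The three keywords come from the paragraph above; a real list would presumably be longer, and the function name is hypothetical.

```python
import re

# Keywords taken from the text above. Plain substring matching also catches
# compound names such as "Googlebot" or "BLEXBot".
BOT_KEYWORDS = ("bot", "crawler", "spider")
KEYWORD_RE = re.compile("|".join(re.escape(k) for k in BOT_KEYWORDS), re.IGNORECASE)


def has_bot_keyword(user_agent: str) -> bool:
    return KEYWORD_RE.search(user_agent) is not None


# Prints True, True, False for the three samples.
for ua in ("Screaming Frog SEO Spider/9.2", "pr-cy.ru Screenshot Bot", "WhatsApp/2.2114.9 N"):
    print(ua, "->", has_bot_keyword(ua))
```

Substring matching is deliberately loose; the trade-off is that it can flag an unrelated User Agent that happens to contain one of these words, so a production filter would likely pair it with more precise patterns.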

3. Identifies as a ‘headless browser’: The User Agent has descriptors revealing it is a screenless browser

A ‘headless browser’ is a browser run by code, without a user interface or any graphical display. Headless browsers are typically used to replicate the human experience of browsing the internet for testing or scraping purposes. Examples:

- Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) HeadlessChrome/85.0.4182.0 Safari/537.36

- Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) HeadlessChrome/104.0.5109.0 Safari/537.36
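Both examples share a `HeadlessChrome/<version>` token where a regular browser would send `Chrome/<version>`, so a simple marker search is enough to sketch this check. The marker list and function name are hypothetical; PhantomJS is included only as another headless browser that names itself in its User Agent.

```python
# Hypothetical marker list: "HeadlessChrome" comes from the examples above;
# PhantomJS is another scriptable headless browser that identifies itself.
HEADLESS_MARKERS = ("headlesschrome", "phantomjs")


def is_headless_browser(user_agent: str) -> bool:
    ua = user_agent.lower()
    return any(marker in ua for marker in HEADLESS_MARKERS)


headless = ("Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
            "(KHTML, like Gecko) HeadlessChrome/85.0.4182.0 Safari/537.36")
print(is_headless_browser(headless))  # True
```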

4. Contains a programming language or tool: The User Agent names a programming language, HTTP library, or command-line tool rather than a device type

This is a clear giveaway that an HTTP request comes from a script rather than a human operating a device. Examples:

- Go-http-client/2.0

- curl/7.68.0
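HTTP libraries and command-line tools typically send a short `<name>/<version>` User Agent by default, so this check can be sketched as a prefix match. The client list below is a hypothetical, non-exhaustive sample built around the two examples above.

```python
import re

# Hypothetical, non-exhaustive list of HTTP libraries and command-line tools
# whose default User Agent has the form "<name>/<version>".
SCRIPT_CLIENTS = ("go-http-client", "curl", "wget", "python-requests", "python-urllib", "okhttp")
SCRIPT_RE = re.compile(r"^(?:" + "|".join(map(re.escape, SCRIPT_CLIENTS)) + r")/", re.IGNORECASE)


def is_script_client(user_agent: str) -> bool:
    # Anchored at the start, so a browser string that merely mentions
    # one of these names later on does not match.
    return SCRIPT_RE.match(user_agent.strip()) is not None


print(is_script_client("Go-http-client/2.0"), is_script_client("curl/7.68.0"))  # True True
```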

5. Is publicly recognized: The User Agent belongs to a known web crawler or scraper

We have identified some User Agents in our data as bots not because they match any of the criteria above, but because they appear in open source lists of common bot User Agents, such as this npm package or this online user agent database. Examples:

- RSSOwl/2.2.1.201312301314 (Windows; U; en)

- WhatsApp/2.2114.9 N
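Taken together, a request counts as bot traffic if its User Agent meets one or more of the five criteria. The Python sketch below restates each criterion as a simple pattern and reports which ones match. Every list in it is a small hypothetical sample (criterion 5 would in practice be loaded from a public list like the ones mentioned above), and it is not Flowcode’s actual implementation.

```python
import re
from typing import List

# Each pattern is an illustrative stand-in for one criterion; the lists are samples.
SELF_DISCLOSURE = re.compile(r"https?://", re.IGNORECASE)           # 1. embedded URL
BOT_KEYWORD = re.compile(r"bot|crawler|spider", re.IGNORECASE)      # 2. keywords
HEADLESS = re.compile(r"headlesschrome|phantomjs", re.IGNORECASE)   # 3. headless browsers
SCRIPT_CLIENT = re.compile(                                         # 4. scripts and tools
    r"(?:go-http-client|curl|wget|python-requests|python-urllib|okhttp)/",
    re.IGNORECASE,
)
# 5. Known agents, normally loaded from a public list; two samples from above.
KNOWN_AGENT_PREFIXES = ("rssowl/", "whatsapp/")


def matched_criteria(user_agent: str) -> List[str]:
    """Return the names of every criterion the User Agent meets."""
    ua = user_agent.strip()
    checks = {
        "self_disclosure": SELF_DISCLOSURE.search(ua) is not None,
        "keyword": BOT_KEYWORD.search(ua) is not None,
        "headless_browser": HEADLESS.search(ua) is not None,
        "script_client": SCRIPT_CLIENT.match(ua) is not None,  # match() anchors at the start
        "known_agent": ua.lower().startswith(KNOWN_AGENT_PREFIXES),
    }
    return [name for name, hit in checks.items() if hit]


def is_bot(user_agent: str) -> bool:
    # Bot traffic: the User Agent meets one or more criteria.
    return len(matched_criteria(user_agent)) > 0


print(matched_criteria("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"))
# ['self_disclosure', 'keyword']
print(is_bot("RSSOwl/2.2.1.201312301314 (Windows; U; en)"))  # True
```

Because User Agents are self-reported, a bot that sends an ordinary browser string passes every one of these checks; rules like these catch bots that identify themselves, not ones that deliberately disguise themselves.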

Ultimately, you’ve partnered with Flowcode because we enable you to capture first-party customer data insights for your business. The Flowlytics Filtering Service is the latest update in improving our platform to provide your team with the most reliable data possible to inform marketing attribution and drive decisions.

The Flowlytics Filtering Service took effect on 02/23/2023 and applies to both historical and future data.

Have more questions about this feature? Connect with us at [email protected].

Want to check out your dashboard?
